What’s new at CIQ?

We’re a team of scientists, Linux geeks, technologists, pilots, designers and more with a mission to make infrastructure something you never have to think about.

fuzzball run: Immediate access to Fuzzball compute

Before Fuzzball v3.2, running nvidia-smi on a GPU node required 14 lines of YAML (YAML Ain't Markup Language) and five separate CLI commands. Fuzzball workflows handle multi-stage pipelines with…

AI infrastructure labor: What GPU setup really costs

The AI infrastructure teams we work with consistently report spending 30–50% of their engineering time on infrastructure work: configuring CUDA environments, debugging driver conflicts, rebuilding…

Container workflows in HPC: Integrating Apptainer with cluster management

Containers have changed how researchers package and share computational workflows. But integrating container runtimes with cluster infrastructure isn't automatic: it requires coordination between…

Community Rocky Linux, RLC+, and RLC Pro: Which option fits your infrastructure?

Rocky Linux is an open source, community-driven Enterprise Linux distribution. CIQ, the founding support partner of the project, builds on that foundation with two offerings: RLC+ and RLC Pro. All…

Your cluster management platform should support your choice of hardware

A cluster built today will run workloads for four, five, maybe seven years. The management platform shapes which hardware you can deploy, which schedulers you can run, and how painful it is to adapt…

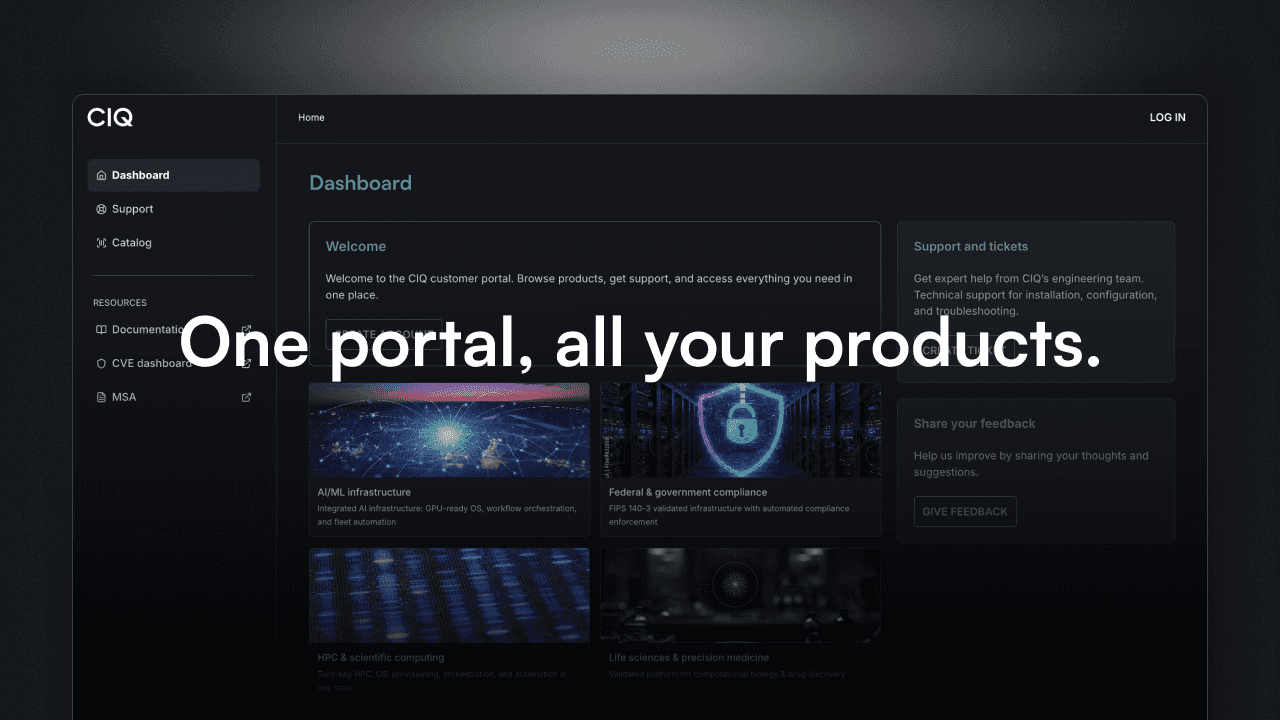

The CIQ portal is live: access, evaluate, and deploy CIQ products, on your own terms

As organizations scale their use of Enterprise Linux for AI, high-performance computing, and production infrastructure, managing software deployments, credentials, and team permissions from a central…

Your Ansible automation runs your company. What happens when it breaks at 2 AM and there's no one to call?

Many organizations start with command-line Ansible or upstream AWX. It works, until it doesn't. There's no audit trail for compliance. And when critical automation fails, you're on your own. Ascender…

Extend GPU hardware life with RLC Pro AI.

The GPU cluster your organization purchased in 2023 sits on the books through 2027 or 2028. The AI software frameworks that determine what those GPUs can actually run have already cycled through…

NVIDIA Dynamo 1.0 can 7x your inference performance. Your OS determines whether you get there.

On March 16, NVIDIA announced Dynamo 1.0. This production-grade, open-source platform functions as the operating system for AI factories, orchestrating GPU and memory resources across clusters to…

Tokens per watt is the new CEO metric. Here's where your OS fits.

Tokens per watt is a new AI efficiency metric that Jensen Huang introduced at GTC 2026 as a way to measure the value of AI infrastructure. Huang’s framing is simple and pointed: data…

NVIDIA just called the inference era. We built the OS for it.

This week at GTC 2026, Jensen Huang made a statement in front of 30,000 developers in San Jose that many infrastructure teams have already been experiencing in the trenches: training is no longer the…

How to migrate from RHEL to RLC Pro without re-architecting

Migrating from Red Hat Enterprise Linux (RHEL) to Rocky Linux from CIQ Pro (RLC Pro) is straightforward and convenient. RLC Pro delivers production-ready Enterprise Linux binary compatibility. This…